In 1911, Frederick Winslow Taylor stood on a factory floor with a stopwatch, timing how long it took a worker to shovel coal. His insight cut deeper than efficiency: it was about where judgment lived. Before Taylor, craft knowledge lived inside the worker: how to grip the shovel, how much to load, when to rest. Taylor extracted that knowledge, encoded it into the system, and removed the worker's discretion from the equation entirely.

Within a generation, his "scientific management" had reorganized American industry. Work became measurable, delegatable, and scalable. The skilled craftsman became a repetitive laborer. The system became permanent. The individual became replaceable.

Over the last century, knowledge work became the dominant form of human labor. And we've built remarkable tools to support it: search engines, dashboards, project management software. But we never systematized it. We gave knowledge workers better shovels, but the judgment stayed inside the human. The manager still decides what gets prioritized. The engineer still decides what gets built. Work still flows through human brains, one decision at a time.

AI changes the nature of that constraint. For the first time, it's possible to extract cognitive judgment from individual humans and encode it into Systems of Action. That's not an improvement on existing tools. It's the Taylor moment for knowledge work. And like Taylor's moment, it will be enormously productive and deeply disruptive in equal measure.

We’ve Just Crossed a Threshold

Something shifted in AI in the last three months. For most of AI's recent history, even the most capable models were fundamentally reactive. They responded, suggested, and waited. The human initiated every action, and the AI served it.

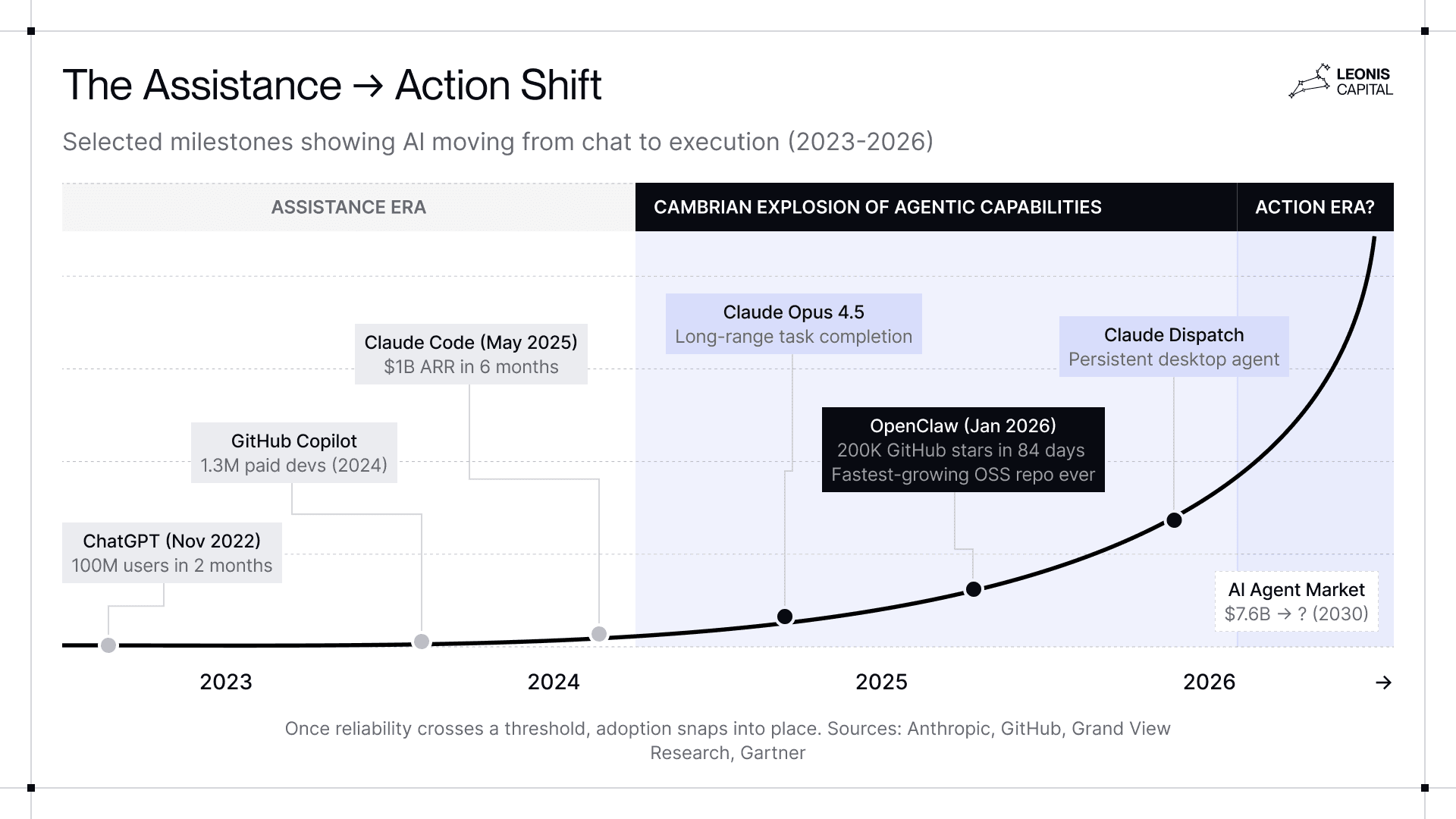

Since late 2025, several things have happened in quick succession. First, Claude Opus 4.5 crossed a threshold where engineers can hand off genuinely long-range tasks and come back to find entire features that work. Then we saw the takeoff of OpenClaw, which can take actions across systems and execute workflows that would previously have required a human in the loop. And finally, Claude Dispatch arrived as Anthropic's polished countermove against OpenClaw, turning your phone into a remote control for a persistent desktop agent.

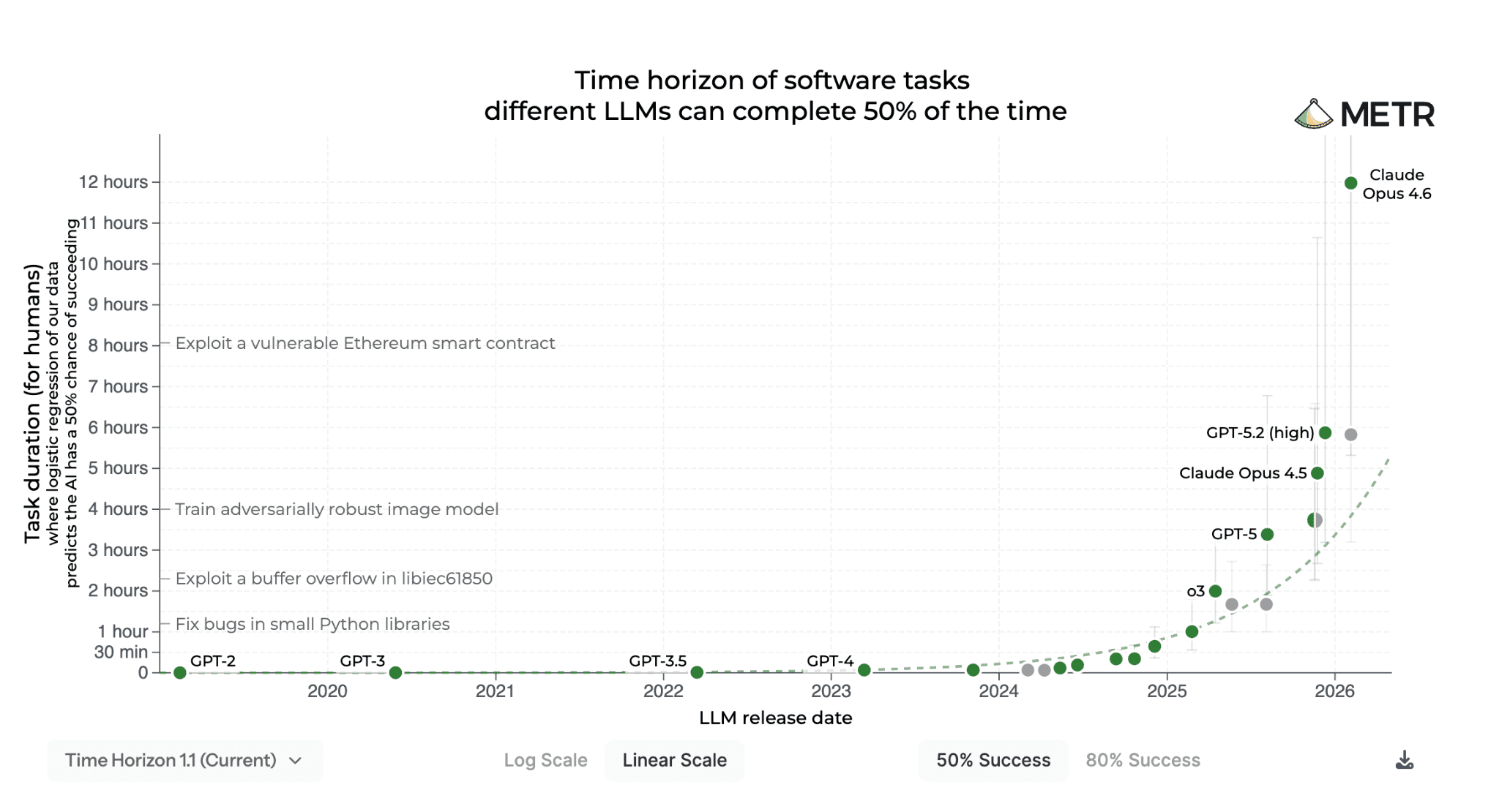

Since GPT-2, the length of continuous tasks AI can handle has been doubling roughly every 7 months. At the end of 2025, that length finally became long enough for AI to own entire processes rather than just assist with them.
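To make the doubling claim concrete, here is a minimal sketch of the arithmetic. It assumes the 7-month doubling time holds steadily, which is a simplification; the function name `task_horizon` and the normalized baseline of 1.0 are illustrative choices, not measured values.

```python
def task_horizon(months_elapsed: float,
                 baseline: float = 1.0,
                 doubling_months: float = 7.0) -> float:
    """Task length after `months_elapsed`, relative to `baseline`,
    assuming a constant doubling time (hypothetical steady trend)."""
    return baseline * 2 ** (months_elapsed / doubling_months)


# Growth multiples implied by a steady 7-month doubling time.
for years in (1, 2, 5):
    multiple = task_horizon(12 * years)
    print(f"{years} year(s): x{multiple:.1f}")
```

Under that assumption, task length grows a bit more than threefold per year and about tenfold every two years, which is why a gap that looks small today can close quickly.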

We’re seeing startups building AI systems that manage their entire engineering teams: checking in on progress, documenting decisions, maintaining context across sprints, and flagging blockers. The engineers don’t manage the system; the system manages the engineers.

None of what we see today is a full System of Action yet. These systems are early and constrained, and they still fail in ways that matter. But they are the first credible evidence that such systems are emerging. They are starting to make reasonable decisions on their own, and companies are starting to reorganize themselves around them. Taylor would have recognized it immediately.

Behind Action, There's Judgment

When most people hear "Systems of Action," they think about the action: the autonomous execution, the AI agents running workflows, the software doing things without being asked. That's the visible part, but it's not the most important part.

The most important part is what makes the action possible: judgment. And to understand why that matters, it helps to be precise about what a System of Action actually is and what it isn't.

Most software built over the last three decades falls into one of two categories. Systems of Record (Salesforce, Workday, SAP) store and retrieve the artifacts of decisions: the contract, the headcount, the transaction. Systems of Engagement (email, Slack, Zoom) facilitate the human communication through which decisions get made. Both are built on the same underlying assumption that humans hold the judgment, and software serves it.

Even the most sophisticated AI tools of the last few years don’t challenge that assumption. A copilot, as the name suggests, requires a human to decide. A computer-use agent executes, while a human specifies the rules and goals. The software remains a vehicle for human judgment. It never holds any of its own.

A System of Action is different in one precise way: judgment is encoded into the system itself. It doesn't wait for a human to tell it exactly what to do. It holds a mandate and acts within it autonomously, escalating only when uncertainty or stakes exceed what it's been trusted to handle.
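One way to picture that mandate-and-escalation behavior is a simple routing rule. This is only a sketch under stated assumptions: the names `Mandate`, `Decision`, and `route`, and the two thresholds, are hypothetical illustrations, not a description of any real product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mandate:
    """Hypothetical limits on what the system is trusted to handle alone."""
    max_stakes: float       # largest impact (e.g. dollars) it may commit by itself
    max_uncertainty: float  # highest self-estimated uncertainty (0..1) it may act on


@dataclass(frozen=True)
class Decision:
    description: str
    stakes: float
    uncertainty: float


def route(decision: Decision, mandate: Mandate) -> str:
    """Return "act" when the decision sits inside the mandate, else "escalate"."""
    within_mandate = (
        decision.stakes <= mandate.max_stakes
        and decision.uncertainty <= mandate.max_uncertainty
    )
    return "act" if within_mandate else "escalate"


# Illustrative values only.
mandate = Mandate(max_stakes=5_000, max_uncertainty=0.2)
print(route(Decision("renew vendor contract", 1_200, 0.05), mandate))      # act
print(route(Decision("sign new enterprise deal", 80_000, 0.10), mandate))  # escalate
```

In this framing, trusting the system more means widening the mandate, not rewriting the rule: the thresholds move, and fewer decisions reach a human.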

The simplest definition of this system is software that makes and executes decisions on behalf of an organization, rather than software that helps humans with their work.

That distinction sounds subtle. It isn't. When judgment moves from the human into the system, everything changes.

Think of it like a smart new employee. They come in, learn how the organization works, understand its priorities and risk tolerances, and start taking things off people's plates without needing to ask for step-by-step instructions. The difference between a human worker and a System of Action is that the latter never leaves, never forgets, and gets better the longer it's there.

Most people stop the analogy there. They're biased by what AI can do today, so they imagine the new employee staying in the entry-level role indefinitely. But that's not how this plays out. The new employee becomes the manager. The manager becomes the CEO. The System of Action that starts by handling workflows ends up governing the organization that runs on top of it.

That’s why the judgment position is the most valuable position in the AI era.

The Great Scarcity Flip

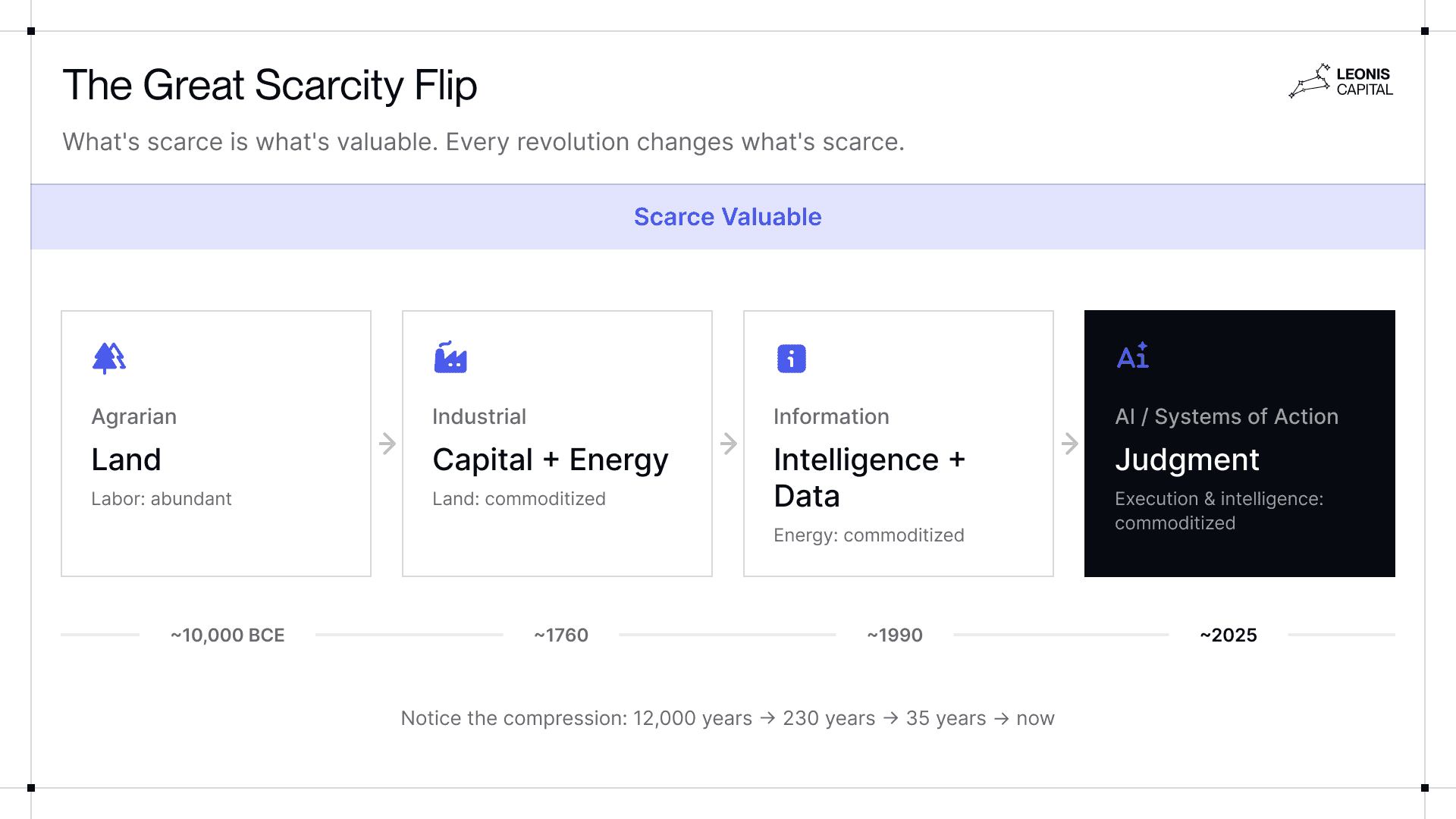

Historically, every technological revolution changes what's scarce, and scarcity is what determines value. In agrarian economies, land was the constraint. In industrial economies, capital and energy were the constraints. In the information age, human intelligence was the constraint.

AI flips that constraint. It makes intelligence a commodity. Any company can now access models that write, code, analyze, and reason at a level that would have seemed extraordinary three years ago. But what ensued was a wave of "AI slop": mostly generic websites, meaningless blog posts, and low-quality code that polluted codebases.

When everyone has access to the same intelligence, the scarce thing becomes judgment.

That's why the battleground has shifted. Every serious AI company is now wrestling with the same problem: how do you build an AI system with good "taste"? Any system can execute, but the ones that become Systems of Action are the ones with the best judgment.

We've seen this pattern before. Just as every SaaS company eventually wanted to become the System of Record, every serious AI company now wants to become the System of Action. Systems of Record made data portable and persistent. Systems of Engagement made communication faster and searchable. Systems of Action make cognitive execution scalable. Each category became infrastructure. Each created companies worth hundreds of billions of dollars.

Unlike intelligence, judgment compounds. A smarter model can always be replaced by a slightly smarter one. But a system that has developed genuine judgment about how a specific organization operates gets harder to replace over time.

Replacing a System of Record is painful. Replacing a System of Action is organizational amnesia. You're not just switching software; you're losing the accumulated judgment of every decision the system has made on your behalf. Companies that own the judgment layer will be irreplaceable.

Why Everyone Will Work for the System of Action

This doesn't mean humans disappear, at least not yet. But it does mean that not all humans survive the transition equally.

Taylorism produced two types of workers: the managers who designed and perfected the system, encoded the rules, set the constraints, and refined the process; and the laborers who executed within it, doing the physical tasks the machines couldn't yet handle.

Systems of Action will produce the same split. The pure executors get replaced first. What remains are two groups. The first are people doing things AI can’t do yet, mostly physical tasks or tasks that require human presence. The second are the "judgment donors," a smaller and more elite group whose job is to feed the system the one thing it still needs: judgment, meaning the original taste, novel context, and strategic direction that it hasn’t yet absorbed. Judgment donors aren’t safe either. Every decision they make, every instinct they apply, every edge case they navigate becomes training data.

And just as Taylorism extracted the craft of skilled workers and encoded it into the system until the craft itself became standardized, Systems of Action will do the same for cognitive work. They will create the intellectual equivalent of an assembly line.

Taylor didn't just describe how work was done. He reshaped work to fit what machines could do, creating assembly lines that required tasks to be broken down into repeatable steps so the machines could operate efficiently. In Systems of Action, work will be broken into inputs the AI can process, outputs it can evaluate, and decisions it can learn from. The knowledge worker who resists that standardization will find themselves increasingly peripheral. The one who embraces it will find themselves increasingly replaceable.

This is already visible in software engineering. Two years ago, AI handled boilerplate. Then it handled implementation. Now it builds entire features. Each wave absorbed the previous level of human skill and pushed the bar higher for human performance. The engineers who survived each transition were the ones who could operate at the next level up. But the next level up keeps moving. There is no permanent floor. The better you are at your job, the more useful you are as a training signal, and the faster you make yourself unnecessary.

The modern factory doesn't run on craftsmen. It runs on scientific management systems. The AI-native organization runs on Systems of Action. The system is the principal. Humans are the resources it deploys. The only people with durable value are those operating genuinely outside the system's knowledge base, with original insight it hasn't yet seen and can't yet replicate.

That bar keeps rising. And unlike the industrial transition, which played out over generations, this one is moving faster than most people can keep up with.

After the Stopwatch

So are we screwed? If you've been reading carefully, it sounds like it. Judgment gets extracted, encoded, compounded. The better you are, the faster you make yourself unnecessary. No permanent floor. Taylor’s workers asked the same question.

That's the same conclusion people reached at every prior inflection point. It was wrong every time. Not because the disruption wasn't real, but because people consistently underestimate the new economic order that follows.

Every technological revolution commoditizes one form of value and unleashes a wave of new value around whatever is still scarce. The printing press destroyed scribes and created an entire literate civilization. Quartz watches wiped out two-thirds of Swiss watchmakers and gave birth to a $30 billion luxury industry built on taste and meaning. American agriculture went from 41% of the workforce to 2% and unlocked the entire modern services economy. Taylor’s scientific management displaced skilled craftsmen and created the modern corporation. In each case, the new roles did not have names yet, which is exactly why nobody inside the disruption could see them coming.

AI will be no different, except that the disruption is moving faster than any previous cycle, which makes the new roles even harder to imagine. The categories of work that Systems of Action create will be as foreign to us now as "software engineer" would have been to a factory foreman in 1920.

So no, we're not screwed (yet). But we are in the painful part. And the question isn't whether Systems of Action reshape the economy. It's which companies earn the right to become the systems that the next economy runs on.

The Cambrian explosion is starting. We’ll cover that in Part 2.